Nvidia has officially unveiled its first mid-range Ada Lovelace graphics card, the RTX 4060 Ti, in 8GB and 16GB variants priced at $399/£389 and $499 respectively. The 8GB card arrives first, with sales beginning on May 24th, while the 16GB variant follows in July. The firm also detailed its mainstream RTX 4060, which launches in July at $299.

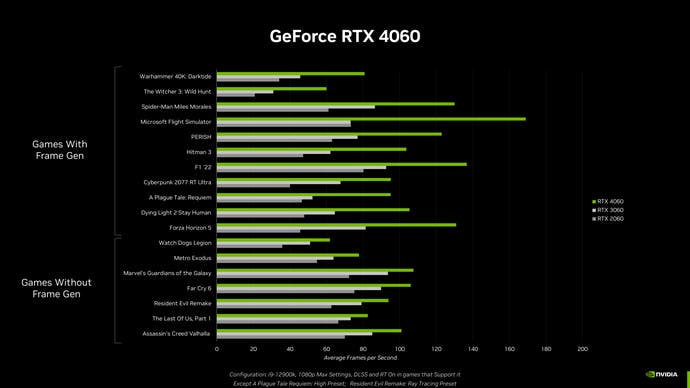

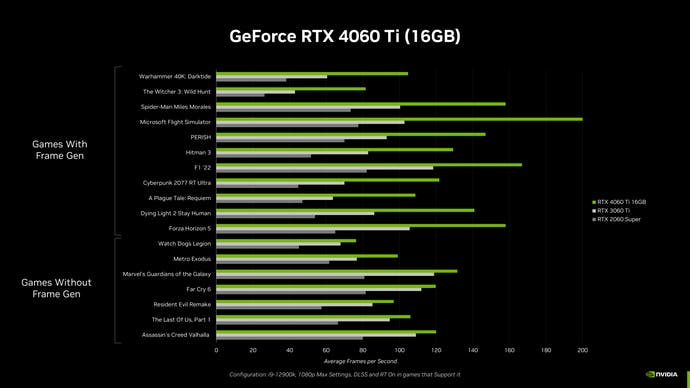

All three models ought to offer a reasonable performance boost over their predecessors, while also adding DLSS 3 frame generation, which is arguably more impactful on mid-range hardware than on the likes of the RTX 4090, a card that is powerful enough without it. That should make the 4060 family a reasonable choice for gaming at 1080p with maxed settings (including RT and DLSS), or at 1440p with some settings reductions.

On average, Nvidia is claiming the 4060 Ti is 1.15x faster than the 3060 Ti and 1.6x faster than the 2060 Super (1.7x and 2.6x faster with DLSS 3). Similarly, the RTX 4060 is 1.2x faster than the RTX 3060 and 1.6x faster than the RTX 2060 (1.7x and 2.3x with DLSS 3).

For now, let’s focus on the RTX 4060 Ti. It’s quite unusual to see multiple variants of a single GPU launched together, although Nvidia did try to pull this off with the RTX 4080 last year. That card was announced with 16GB and 12GB SKUs, each with substantially different specifications, before the firm ‘unlaunched’ the 12GB model, later relabelling it as the RTX 4070 Ti.

This time, the two RTX 4060 Ti cards appear identical apart from their VRAM allocation, sharing the same core count (4352), memory bandwidth (554GB/s effective), power draw (160W TDP) and so on. That’s less confusing than the RTX 4080 situation, but paying $100 extra for the 16GB model may rub consumers the wrong way, given the recent run of PC ports that launched with disastrous performance on 8GB cards. Still, launching only an 8GB card would have played into AMD’s recent marketing of its VRAM advantage, so it’s easy to see why Nvidia has ended up here.

We’ll have to test both 4060 Ti models before we have a good idea of their performance profiles, but if the RTX 4070 12GB can deliver circa 30 percent more performance for $100 more than the 4060 Ti 16GB, that leaves the 16GB card in a bit of a weird place.
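To put rough numbers on that, here is a minimal Python sketch of relative performance per dollar. It assumes the RTX 4070 sells for $599 ($100 more than the 4060 Ti 16GB) and is 30 percent faster, which is the hypothetical scenario above rather than a test result, and that the 8GB and 16GB 4060 Ti models perform identically whenever VRAM isn’t a bottleneck. The `cards` table and `relative_value` function are illustrative names, not anything from Nvidia.

```python
# Hypothetical value check for the RTX 4060 Ti 16GB's market positioning.
# Assumptions: the RTX 4070 is ~30% faster than the 4060 Ti 16GB (a scenario,
# not a measured result), and the 4060 Ti 8GB matches the 16GB card's
# performance when VRAM is not a limiting factor.

cards = {
    # name: (price in USD, performance relative to the 4060 Ti 16GB)
    "RTX 4060 Ti 8GB": (399, 1.00),
    "RTX 4060 Ti 16GB": (499, 1.00),
    "RTX 4070": (599, 1.30),
}

baseline_price, baseline_perf = cards["RTX 4060 Ti 16GB"]
baseline_value = baseline_perf / baseline_price


def relative_value(name: str) -> float:
    """Performance per dollar, normalised so the 4060 Ti 16GB scores 1.0."""
    price, perf = cards[name]
    return (perf / price) / baseline_value


for name in cards:
    print(f"{name}: {relative_value(name):.2f}x perf per dollar")
```

Under these assumptions the 16GB card scores lowest of the three, with the 8GB model at roughly 1.25x and the 4070 at roughly 1.08x; games that overflow 8GB of VRAM would shift the picture in the 16GB card’s favour.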

| Nvidia RTX | 4060 Ti | 4060 | 3060 Ti | 3060 | 2060S | 2060 |
|---|---|---|---|---|---|---|
| Shaders (TF) | 22 | 15 | 16 | 13 | 7 | 7 |
| RT cores (TF) | 51 | 35 | 32 | 25 | 22 | 20 |
| Tensor cores (TF) | 353 | 242 | 130 | 102 | 57 | 52 |
| VRAM | 8GB/16GB | 8GB | 8GB | 12GB | 8GB | 6GB |
| L2 cache | 32MB | 24MB | 4MB | 3MB | 4MB | 3MB |
| Memory bandwidth | 288GB/s (554GB/s effective) | 272GB/s (453GB/s effective) | 448GB/s | 360GB/s | 448GB/s | 336GB/s |
| TDP | 160W | 110W | 200W | 170W | 175W | 138W |
| Price | $399/$499 | $299 | $399 | $329 | $399 | $349 |

Unusually, rather than including details on exact core counts, Nvidia opted to provide teraflop (TF) figures for each card’s shaders, RT cores and Tensor cores (as shown in the table above).

It’s interesting to see that the $299 4060 is actually slightly cheaper than the last-gen 3060, which debuted at $329 and therefore lost out in the value stakes versus the significantly faster 3060 Ti at $399. With an approximately 30 to 40 percent increase in core count, memory bandwidth and TDP from the 4060 to 4060 Ti, a $299 price point should make the two mainstream 40-series cards much closer in terms of frames per dollar.

However, you’re also going from 12GB of VRAM on the 3060 to 8GB on the 4060, a choice necessitated by the reduction in memory bus width from 192-bit to 128-bit. (This isn’t obvious, but each GDDR6 memory chip connects via a 32-bit interface, so a 128-bit bus accommodates four chips. With 2GB chips, that gives 8GB in total; running eight chips in ‘clamshell’ mode, with two chips sharing each 32-bit channel, gives 16GB. On a 192-bit interface, your choices are 6GB, 12GB or 24GB.) Again, it’s possible to see why Nvidia has opted for 8GB on its mainstream GPU, but this is still likely to prove a contentious decision.
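The bus-width arithmetic can be sketched in a few lines of Python. This is an illustration under stated assumptions rather than a description of Nvidia’s actual board designs: it assumes each GDDR6 chip uses a 32-bit interface, that chips come in 1GB or 2GB densities, and that clamshell mode doubles the chip count by pairing two chips per channel. The function name `vram_options` is hypothetical.

```python
# Sketch: which total VRAM capacities a given memory bus width allows.
# Assumptions (illustrative, not from Nvidia): each GDDR6 chip uses a 32-bit
# interface, chips come in 1GB or 2GB densities, and "clamshell" mode pairs
# two chips on each 32-bit channel, doubling the chip count.


def vram_options(bus_width_bits: int,
                 chip_sizes_gb: tuple[int, ...] = (1, 2),
                 bits_per_chip: int = 32) -> list[int]:
    """Return the possible total VRAM capacities (GB) for a bus width."""
    if bus_width_bits % bits_per_chip != 0:
        raise ValueError("bus width must be a multiple of the chip interface width")
    chips = bus_width_bits // bits_per_chip  # chips in normal (non-clamshell) mode
    totals = set()
    for size in chip_sizes_gb:
        totals.add(chips * size)      # one chip per 32-bit channel
        totals.add(2 * chips * size)  # clamshell: two chips share each channel
    return sorted(totals)


print(vram_options(128))  # RTX 4060 / 4060 Ti bus: [4, 8, 16]
print(vram_options(192))  # RTX 3060 bus: [6, 12, 24]
```

Under these assumptions a 128-bit bus allows 4GB, 8GB or 16GB, and a 192-bit bus allows 6GB, 12GB or 24GB, which is why 8GB and 16GB are the realistic options for the 4060 family.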

Overall then, it’s great to see the first properly mid-range graphics cards announced this generation – if we don’t count Intel’s Arc A750 and A770 – but we’ll have to see how these GPUs shake out both in terms of outright performance and market positioning before we can pass judgement. There’s certainly potential here for a messy release, so buckle up.
